BOBCATSSS 2017. LIS students have the power!

Last week, 25-27 January 2017, BOBCATSSS 2017 was held at the University of Tampere, Finland. As every year, LIS students from several European countries (and from the USA) shared their knowledge and experiences in paper sessions and workshops.

The conference began with an opening ceremony where the Moomins were the highlight of several performances. Next, Carol Tenopir, professor at the University of Tennessee, Knoxville, presented "Researchers need information tool: how information improves the quality of worklife", about reading habits and their value in Finland. After lunch, the day continued with paper sessions and workshops, some of them very interesting. The first day's social programme offered attendees a wide range of venues to visit. I chose the Lenin Museum, the first museum dedicated to Lenin outside the Soviet Union, which commemorates the 1905 Bolshevik conference in Tampere where Lenin and Stalin first met, years before the 1917 Revolution. Very interesting…

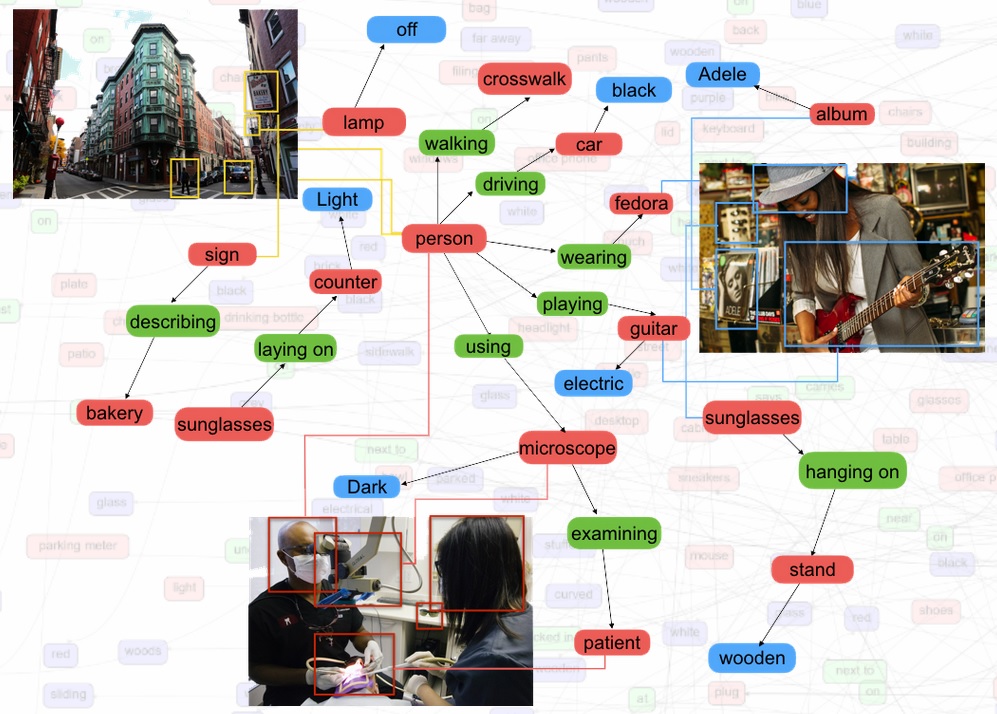

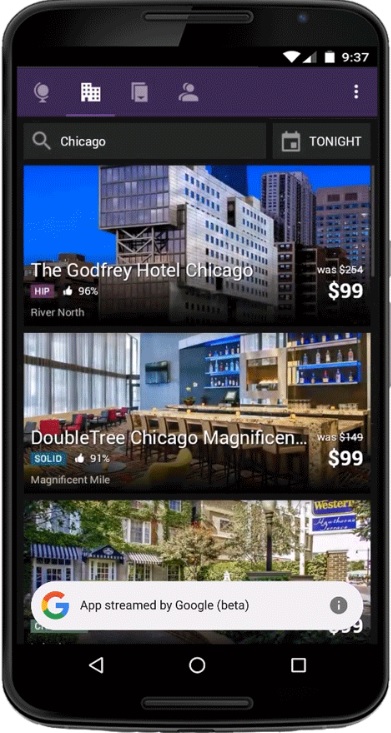

On the second day, Guus van den Brekel (University Medical Centre Groningen) opened the conference with his keynote "Interactive media: about Information and libraries", on the challenges libraries face in an increasingly interactive media world. Besides papers, workshops and meetings, Thursday was the day of the Evening Party! Great music and a great dance floor at Kubli Club.

The last day belonged to Josie Billington, from the University of Liverpool, who showed us the power of Shared Reading to improve mental health and wellbeing. During the closing ceremony, the BOBCATSSS 2017 organizers handed the flag to the BOBCATSSS 2018 organizers, the University of Latvia and Eötvös University of Budapest, Hungary. See you next year in Riga, Latvia!

Enjoy it!

Andreu Sulé

University of Barcelona